We talk a lot about compute power in AI.

How many GPUs. How many data centres. How much memory. And all of that matters, enormously.

But there’s something else in the AI race that doesn’t get nearly enough attention. In fact, I think it’s the single most important factor in determining which companies — and which investors — win from here.

Speed.

Not just processing speed. Not just clock speed.

The speed at which an AI model can think, respond, and act.

I’ve written to you before about this, but it’s critical to understand: when it comes to inference, speed matters most.

The speed at which data moves from memory to compute and back again. The speed at which intelligence is delivered to the real world. That speed, or its flip side, latency, can be the difference between a successful AI strategy and a failed one.

Because in a world where AI is moving from training to inference, from learning to doing, speed is the lifeblood of AI.

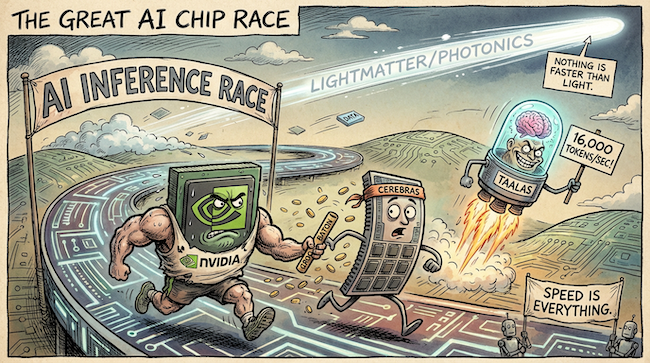

The inference revolution

Just a week ago, Nvidia reportedly committed around US$20 billion in a major deal involving Groq, one of the leading inference chip designers. While Groq remains an independent company, it’s widely believed Nvidia paid a significant premium to secure access to its technology and prevent it from leapfrogging Nvidia’s own roadmap. Call it an IP land grab.

But it was proof that the smartest company in AI sees what’s coming.

Training teaches the model. Inference is where it thinks, responds, and acts. Every query, every autonomous decision, every real-time interaction is an inference event.

And the best inference demands speed above all else.

Groq understood this from day one. Its Language Processing Unit places compute and memory on the same chip, eliminating the bottleneck that even Nvidia’s best GPUs struggle with. That’s why Nvidia was willing to pay so much to bring Groq inside the tent.

Then there’s Cerebras — another company I flagged to you last October.

Its wafer-scale engine is delivering over 2,000 tokens per second on large language models. That’s significantly faster than most GPU-based clouds, which typically operate between 30 and 100 tokens per second.
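To make those rates concrete, here is a rough back-of-the-envelope sketch in Python. The 500-token response length is my own assumption for illustration; the throughput figures are the ones quoted above. It ignores time to first token, network delay and batching, so treat it as indicative only.

```python
# Rough sketch: how long one response takes to generate at a given throughput.
# RESPONSE_TOKENS is an illustrative assumption, not a vendor figure.
RESPONSE_TOKENS = 500


def seconds_to_generate(tokens: int, tokens_per_second: float) -> float:
    """Generation time only; ignores time to first token and network delay."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


# Throughput figures as quoted in the text (tokens per second).
rates = {
    "GPU cloud (low end)": 30,
    "GPU cloud (high end)": 100,
    "Cerebras (quoted)": 2000,
}

for name, rate in rates.items():
    print(f"{name}: {seconds_to_generate(RESPONSE_TOKENS, rate):.2f} s")
```

On those assumptions, the same answer takes roughly 17 seconds at the low end of the GPU range, 5 seconds at the high end, and a quarter of a second at 2,000 tokens per second.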

Cerebras has since demonstrated that even frontier-scale 405-billion-parameter models can run at nearly 1,000 tokens per second on its hardware. Expect an IPO from the company this year.

And now, this week, a new entrant just blew the doors off those numbers even further.

Taalas, a Toronto-based start-up that just raised US$169 million, has taken a radically different approach. Instead of building a general-purpose chip and loading a model onto it, it’s hardwired the AI model directly into the silicon.

The model becomes the processor.

The result? Over 16,000 tokens per second.

That’s 73 times faster than Nvidia’s H200 — using a fraction of the power.
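Taken at face value, those two figures imply a baseline for the H200. Here is a quick sketch of the arithmetic. It assumes both numbers refer to the same model and the same kind of measurement, which the quoted figures don't spell out.

```python
# Sketch: back out the H200 throughput implied by the two quoted figures.
# Assumes both describe the same model under comparable conditions.
TAALAS_TOKENS_PER_SECOND = 16_000  # "over 16,000", as quoted
SPEEDUP_VS_H200 = 73               # "73 times faster", as quoted


def implied_baseline(fast_rate: float, speedup: float) -> float:
    """Throughput of the slower system implied by a rate and a speedup."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return fast_rate / speedup


h200_rate = implied_baseline(TAALAS_TOKENS_PER_SECOND, SPEEDUP_VS_H200)
print(f"Implied H200 rate: about {round(h200_rate)} tokens per second")
```

That works out to about 219 tokens per second for the H200 in this comparison.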

No high-bandwidth memory needed. No liquid cooling. No exotic packaging. Just raw speed from a chip that does one thing and does it absurdly well.

There is, of course, a trade-off — for now. Each chip runs a single, specific model.

Given how quickly models evolve, a custom chip can be two or three generations behind by the time it’s ready. But Taalas claims it can move from model weights to finished silicon in roughly two months.

If AI models begin to standardise and the pace of new releases slows, this approach could split the entire chip market in two: flexible GPUs for training, and hardwired ASICs for inference at scale.

Nothing is faster than light

Here’s where it becomes especially interesting for investors.

Nvidia has effectively absorbed Groq. Cerebras is still private. Taalas is a start-up.

Furthermore, they’re all still using electrons. And electricity, no matter how fast, has a ceiling. It generates heat and consumes a lot of power.

Which brings us to photonics.

I first wrote to you about Lightmatter back in October, when I identified the company as one of the next wave of AI infrastructure stars. Since then, its trajectory has only accelerated.

Lightmatter’s Passage platform uses light instead of electrons to move data between AI chips, delivering bandwidth that dwarfs what copper can handle.

Already with a US$4.4 billion valuation and more innovation ready to deploy this year, Lightmatter is putting photonics at the top of the pile as the next big leap in AI infrastructure.

And Lightmatter is not alone.

There are publicly listed companies — investable today — with significant exposure to this shift. Some sit in critical positions within the optical ecosystem. And I believe one, in particular, stands above the rest.

Earlier this year I told you to follow the memory. Then I said to follow the storage. Those themes have played out well and, for smart investors, been hugely profitable.

The next layer of this is speed.

Memory gives AI the capacity to think.

Storage gives AI the ability to remember.

Speed determines how fast it all happens.

And in a world where real-time inference powers everything from autonomous vehicles to AI agents to robotics, speed isn’t a nice-to-have.

It’s the entire game.

Remember: speed is everything.

And nothing is faster than light.

Until next time,

Sam Volkering

Investment Director, Southbank Investment Research

PS In the piece above, I explained why inference speed — not just compute — is becoming the real battlefield in AI.

Groq. Cerebras. Taalas. All chasing faster inference.

But the next true leap in speed won’t come from more electrons.

It comes from light.

And right now, a small publicly listed company sits in a critical position within that photonics layer, the same layer Nvidia must strengthen if it wants to keep dominating the AI stack.

A fresh signal inside our system is pointing directly at it.

If Nvidia makes a move to secure this capability, the repricing could be swift.

We’ve detailed the company, the technology, and why the timing matters now.

Have a look before speed — once again — leaves most investors behind.