I weirdly like it. Also, I like to think I’m pretty good at cutting through the corporate noise.

Most are forgettable and don’t really tell us anything we don’t already know.

But just sometimes…

About two hours after it was posted on Amazon’s site, I read the annual letter from Amazon CEO Andy Jassy.

I’ve gone through it three times now. I’ve shared it with most of my trusted circle — even those who don’t really care about tech.

Why?

Because this is the most aggressively bullish statement on the direction of AI infrastructure (and Amazon) I’ve come across. Full stop.

Not even what Jensen Huang (Nvidia CEO) has said in the past has me this excited about AI.

One line in particular really gave me goosebumps. In fact, I even highlighted it and sent it directly to my brother with the message: “Whatever you’re doing, stop and read this.”

“We’re not investing approximately $200 billion in capex in 2026 on a hunch.”

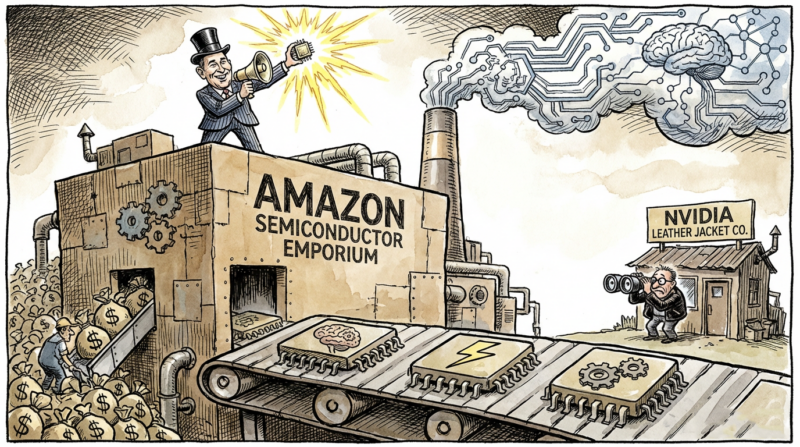

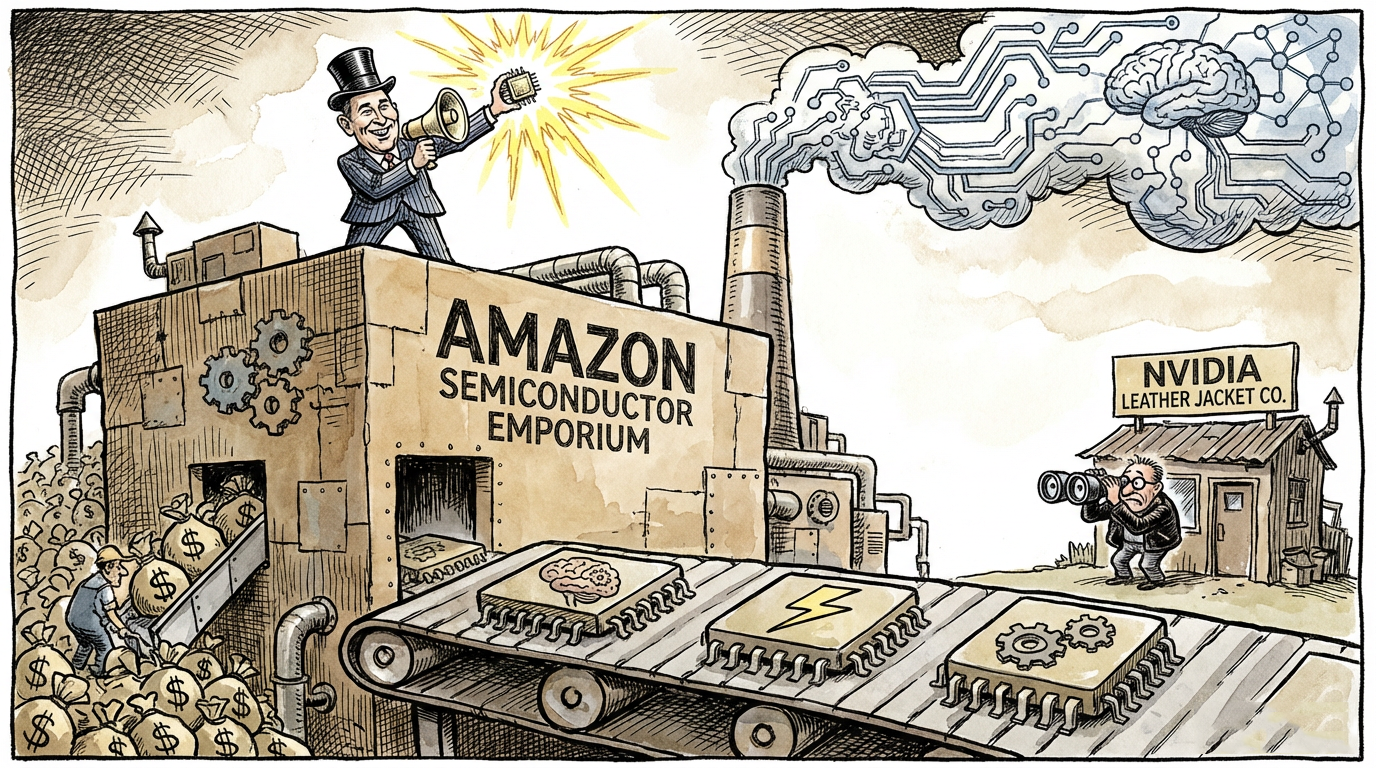

That is a staggering statement. We know they’re spending big on capex, but he’s directly saying here that this isn’t fun and games. They know what’s coming. And the numbers he wrote are frankly worrying for the world’s biggest chip company.

A $1.25 trillion chip company hiding inside Amazon

Jassy revealed that Amazon’s custom chip business — Trainium, Graviton, and Nitro combined — is already running at over US$20 billion in annual revenue.

That alone is a serious number. But then he dropped something wild.

If that chip division were a standalone business, selling to AWS and third parties the way Nvidia or AMD do, its effective annual run rate would be roughly US$50 billion.

Fifty. Billion. Dollars.

To give you some further context, Nvidia trades at a price-to-sales ratio of around 25. Apply that same multiple to a US$50 billion run rate and it implies there’s a $1.25 trillion chip business inside Amazon.
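For anyone who wants to check my maths, here is the back-of-envelope sum as a minimal Python sketch. It uses only the figures quoted above; the function and variable names are my own, and borrowing Nvidia’s multiple for Amazon’s chip unit is an assumption on my part, not something Jassy claimed.

```python
# Back-of-envelope valuation: implied value = revenue run rate x price-to-sales multiple.
# All figures are the ones quoted in the text; the names are illustrative only.

def implied_valuation(run_rate_usd: float, price_to_sales: float) -> float:
    """Return the market value implied by applying a P/S multiple to a run rate."""
    return run_rate_usd * price_to_sales

STANDALONE_RUN_RATE = 50e9   # US$50 billion: the hypothetical standalone run rate
REPORTED_RUN_RATE = 20e9     # US$20 billion: the run rate actually reported
NVIDIA_LIKE_MULTIPLE = 25    # ASSUMPTION: Nvidia's approximate P/S applied to Amazon

standalone_value = implied_valuation(STANDALONE_RUN_RATE, NVIDIA_LIKE_MULTIPLE)
reported_value = implied_valuation(REPORTED_RUN_RATE, NVIDIA_LIKE_MULTIPLE)

print(f"Standalone case: ${standalone_value / 1e12:.2f} trillion")
print(f"Reported case:   ${reported_value / 1e12:.2f} trillion")
```

Even on the lower, reported run rate, the same multiple would still point to a business worth around half a trillion dollars.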

If your jaw hasn’t hit the floor yet, then maybe this game isn’t for you.

That would make Amazon’s chip business one of the largest semiconductor companies in the world. And it’s just there, sitting inside Amazon, and no one knew about it.

The demand for its chips is mind-boggling.

Trainium2 offered about 30% better price-performance than comparable GPUs. It’s sold out.

Trainium3 shipped early this year with another 30% to 40% improvement. Nearly fully sold out.

Trainium4 is 18 months away and already has significant capacity reserved. It’ll be sold out soon enough.
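To put those generational gains together, here is a rough sketch of how they might stack up. It assumes the Trainium3 improvement is measured against Trainium2, that the gains compound multiplicatively, and that the GPU baseline stays fixed. None of that is confirmed by the figures above, so treat the output as illustrative only.

```python
# Rough compounding of the price-performance gains quoted in the text.
# ASSUMPTIONS: gains multiply, Trainium3 is measured against Trainium2,
# and the GPU baseline does not move. The function name is illustrative.

def compounded_advantage(gains):
    """Multiply successive fractional gains into a single factor."""
    factor = 1.0
    for gain in gains:
        factor *= 1.0 + gain
    return factor

low = compounded_advantage([0.30, 0.30])   # Trainium2 gain, then low end for Trainium3
high = compounded_advantage([0.30, 0.40])  # Trainium2 gain, then high end for Trainium3

print(f"Trainium3 vs the original GPU baseline: {low:.2f}x to {high:.2f}x")
```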

And the economics are bonkers.

At scale, Jassy expects Trainium to save AWS tens of billions in capex per year and deliver several hundred basis points of operating margin advantage versus relying on Nvidia for inference.

I’ll be blunt.

I think Amazon’s chip business will kill Nvidia’s dominance, particularly on the inference side.

And I think it’s so big, growing so fast, and so important to Amazon that the company will probably spin it out as a standalone business.

If Amazon does spin it out, existing Amazon stockholders would likely end up owning the chip company too. That’s speculation on my part, but it would make strategic sense.

What Amazon is doing now with its inference chips and systems isn’t all that different to what it’s been doing with its CPUs against Intel.

Today, 98% of the top 1,000 EC2 customers use Graviton. Intel never saw it coming.

And the demand for Graviton, Jassy said, is so big that “two large AWS customers have already asked if they could buy *all* of our Graviton instance capacity in 2026.”

I suspect Nvidia is watching what’s happening on inference very carefully.

Cerebras + Trainium is the $10 trillion play

It’s also worth noting that this letter comes only weeks after Amazon and AWS announced a tie-up with inference chip maker Cerebras.

I wrote about Cerebras in October last year and, more recently, in February this year.

Well, in March, AWS announced a collaboration to deploy Cerebras CS-3 systems inside AWS data centres, available through Amazon Bedrock.

Trainium handles the compute-heavy “prefill” stage, processing the incoming prompt. Then the workload hands off to Cerebras for the “decode” stage, where the actual output tokens are generated at blistering speed. AWS called it an order of magnitude faster than anything available today.
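If the prefill and decode split sounds abstract, here is a toy Python sketch of the hand-off. It is not AWS’s or Cerebras’s actual software: every name in it is hypothetical, and the “cache” is a simple stand-in for the attention state a real model would pass between stages.

```python
from dataclasses import dataclass, field
from typing import List

# Toy model of a two-stage inference pipeline. All names are hypothetical.

@dataclass
class PrefillResult:
    """State handed from the prefill stage to the decode stage."""
    prompt_tokens: List[str]
    kv_cache: List[int] = field(default_factory=list)  # stand-in for attention state

def prefill(prompt: str) -> PrefillResult:
    """Stage 1 (Trainium's role in the text): process the whole prompt in one pass."""
    tokens = prompt.split()
    # One toy cache entry per prompt token; real systems store keys and values.
    return PrefillResult(prompt_tokens=tokens, kv_cache=[len(t) for t in tokens])

def decode(state: PrefillResult, max_new_tokens: int) -> List[str]:
    """Stage 2 (Cerebras's role in the text): generate output tokens one at a time."""
    output = []
    for step in range(max_new_tokens):
        # Each step reads the cache and then extends it, so decode is sequential.
        token = f"tok{step}_ctx{len(state.kv_cache)}"
        state.kv_cache.append(len(token))
        output.append(token)
    return output

def serve(prompt: str, max_new_tokens: int = 3) -> List[str]:
    """Run prefill once, then hand the resulting state to decode."""
    state = prefill(prompt)
    return decode(state, max_new_tokens)

result = serve("what did the letter say")
print(result)
```

The point of the split is that the two stages stress hardware differently: prefill chews through the whole prompt at once, while decode has to produce tokens one after another, which is where raw speed matters most.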

Cerebras recently did a funding round valuing it at $23 billion. That was before the Amazon deal. It is said to be on the IPO trail now, aiming to hit the market this year.

There’s a strong chance that either the IPO happens soon with Amazon as a key investor, or Amazon acquires Cerebras outright before it lists (which I wrote to you about in January).

I can see Amazon buying Cerebras before its IPO.

It would make sense for Amazon. It adds another layer to an incredible strategy that’s paying off big time: Trainium for cost-efficient training and inference, Graviton for general compute, Cerebras for ultra-fast decode.

What this also forces me to consider is the question: Should you load up on Amazon even if it’s just in order to hedge an Nvidia position?

What Jassy outlined is not the strategy of a company content to be Nvidia’s biggest customer and to rely on it for its future.

Amazon is a self-sufficient company methodically building its own infrastructure to reduce that dependency, year by year, chip by chip.

AWS AI revenue exceeded a US$15 billion run rate in Q1 2026, and it’s climbing fast.

The chips business could be a standalone titan. The capex is astronomical, and it isn’t being spent on “a hunch”.

Mix in Cerebras with an inference speed advantage nobody else in the hyperscaler world can match right now, and maybe Amazon becomes the world’s first $10 trillion company.

Until next time,

Sam Volkering

Investment Director, Southbank Investment Research

PS Amazon isn’t spending $200 billion on AI infrastructure on a guess. They have visibility most investors don’t.

The problem is, you don’t.

And in a market driven by headlines, geopolitics, and sudden shifts in sentiment, that gap creates hesitation — the kind that leads to missed opportunities or bad decisions.

That’s exactly why we’re working to bring you a better way. A system designed to help you act with clarity, even when markets don’t make sense.

I’ll walk through it step by step in this private briefing. Save your seat here.